Inside Deep Zoom –

Part I: Multiscale Imaging

In March 2007, Blaise Aguera y Arcas presented Seadragon & Photosynth at TED, which created quite some buzz around the web. About a year later, in March 2008, Microsoft released Deep Zoom (formerly Seadragon) as a «killer feature» of their Silverlight 2 (Beta) launch at Mix08. Following this event, there was quite some back and forth in the blogosphere (damn, I hate that word) about the true innovation behind Microsoft's Deep Zoom.

Today, I don't want to get into the same kind of discussion but rather start a series that will give you a «behind the scenes» look at Microsoft's Deep Zoom and similar technologies.

This first part of «Inside Deep Zoom» introduces the main ideas & concepts behind Deep Zoom. In part two, I'll talk about some of the mathematics involved and finally, part three will feature a discussion of the possibilities of this kind of technology and a demo of something you probably haven't seen yet.

Background

As part of my awesome internship at Zoomorama in Paris, I was working on some amazing things (of which you'll hopefully hear soon) and in my spare time, I decided to have a closer look at Deep Zoom (formerly Seadragon). That is when I did a lot of research on this topic and had the idea for this series, in which I want to share what I learned.

Introduction

Let's begin with a quote from Blaise Aguera y Arcas' demo of Seadragon at the TED conference[1]:

…the only thing that ought to limit the performance of a system like this one is the number of pixels on your screen at any given moment.

What is this supposed to mean? See, I have a 24" screen with a maximum resolution of 1920 x 1200 pixels. Now let's take a photo from my digital camera, which shoots at 12 megapixels. The photo's size is typically 3872 x 2592 pixels. When I get the photo onto my computer, I roughly end up with something that looks like this:

No matter how I put it, I'll never be able to see the entire 12 megapixel photo at 100% magnification on my 2.3 megapixel screen. Although this might seem obvious, let's take the time to look at it from another angle: with this in mind, we no longer care whether an image has 10 megapixels (that is 10'000'000 pixels) or 10 gigapixels (10'000'000'000 pixels), since the number of pixels we can see at any moment is limited by the resolution of our screen. This in turn means that looking at a 10 megapixel image and a 10 gigapixel image on the same computer screen should have the same performance. The same should hold for looking at the same two images on a mobile device such as the iPhone. However, it is important to note that, with reference to the quote above, we might experience a performance difference between the two devices, since they differ in the number of pixels they can display.
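To put numbers on this, here is a small Python sketch. It is not part of Seadragon or Deep Zoom; the function name `visible_pixels` is made up for illustration, and the 100'000 x 100'000 pixel image is a hypothetical stand-in for a 10 gigapixel image. It counts how many pixels of an image can be on screen at once at 100% magnification, using the screen and photo sizes from above.

```python
def visible_pixels(image_size, screen_size):
    """Number of image pixels that fit on screen at 100% magnification."""
    image_w, image_h = image_size
    screen_w, screen_h = screen_size
    # At 100% one image pixel maps to one screen pixel, so the visible
    # region can never be larger than the screen (or the image itself).
    return min(image_w, screen_w) * min(image_h, screen_h)


screen = (1920, 1200)            # the 24" screen from the text
photo = (3872, 2592)             # the photo size from the text
gigapixel = (100_000, 100_000)   # hypothetical 10 gigapixel image

for name, size in (("photo", photo), ("gigapixel image", gigapixel)):
    shown = visible_pixels(size, screen)
    total = size[0] * size[1]
    print(f"{name}: {shown} of {total} pixels visible ({shown / total:.4%})")
```

Both images yield the same number of visible pixels, which is exactly the point of the quote: the screen, not the image, sets the upper bound.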

So how do we manage to make the performance of displaying image data independent of its resolution? This is where the concept of an image pyramid steps in.

The Image Pyramid

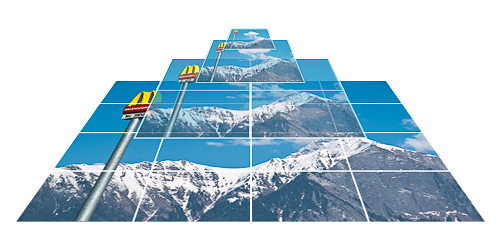

Deep Zoom, or for that matter any other similar technology such as Zoomorama, Zoomify, Google Maps etc., uses something called an image pyramid as a basic building block for displaying large images in an efficient way:

The picture above illustrates the layout of such an image pyramid. A typical image pyramid serves two purposes: it stores an image of any size at many different resolutions (hence the term multi-scale), and it slices each of these resolutions into many parts, referred to as tiles.
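As a rough sketch of this layout, the following Python snippet lists the levels of a generic image pyramid. The rules it uses are my assumptions for illustration, not a description of Deep Zoom's actual format: each level halves the previous one (rounding up), the pyramid stops once the whole image fits into a single tile, and the tile size defaults to 256 pixels. The name `pyramid_levels` is hypothetical as well.

```python
import math


def pyramid_levels(width, height, tile_size=256):
    """Describe each level of a simple image pyramid, smallest level first.

    Assumptions (illustrative only): every level is half the size of the
    next larger one, and the smallest level fits into a single tile.
    """
    levels = []
    w, h = width, height
    while True:
        columns = math.ceil(w / tile_size)
        rows = math.ceil(h / tile_size)
        levels.append({"width": w, "height": h,
                       "columns": columns, "rows": rows})
        if columns == 1 and rows == 1:
            break
        # Halve the resolution for the next smaller level.
        w = max(1, math.ceil(w / 2))
        h = max(1, math.ceil(h / 2))
    levels.reverse()
    return levels


for index, level in enumerate(pyramid_levels(3872, 2592)):
    tiles = level["columns"] * level["rows"]
    print(f"level {index}: {level['width']} x {level['height']} pixels, "
          f"{level['columns']} x {level['rows']} = {tiles} tiles")
```

With a different stopping rule or tile size you get a different number of levels and tiles, which is why these two values act as the tuning parameters of the pyramid.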

Because the pyramid stores the original image (redundantly) at different resolutions, we can display the resolution that is closest to the one we need and, when the entire image does not fit on our screen, load only the parts of the image (tiles) that are actually visible. Setting the pyramid's parameters, such as the number of levels and the tile size, allows us to control the required data transfer.
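The two decisions described here, picking a level and picking the visible tiles, could look roughly like the following Python sketch. The function names, the "smallest level that is at least as wide as the image appears on screen" rule and the 256 pixel tile size are assumptions of mine, not Deep Zoom's documented behavior. The viewport is given as integer pixel coordinates (left, top, right, bottom) within the chosen level.

```python
def choose_level(level_widths, displayed_width):
    """Index of the smallest level at least as wide as the image is displayed.

    level_widths must be sorted from the smallest to the largest level.
    """
    for index, width in enumerate(level_widths):
        if width >= displayed_width:
            return index
    # Zoomed in beyond full resolution: the largest level is all we have.
    return len(level_widths) - 1


def visible_tiles(viewport, level_size, tile_size=256):
    """(column, row) of every tile that overlaps the viewport."""
    left, top, right, bottom = viewport
    level_w, level_h = level_size
    # Clamp the viewport to the image so we never ask for missing tiles.
    left, top = max(0, left), max(0, top)
    right, bottom = min(level_w, right), min(level_h, bottom)
    if left >= right or top >= bottom:
        return []
    first_col, first_row = left // tile_size, top // tile_size
    last_col, last_row = (right - 1) // tile_size, (bottom - 1) // tile_size
    return [(col, row)
            for row in range(first_row, last_row + 1)
            for col in range(first_col, last_col + 1)]


widths = [242, 484, 968, 1936, 3872]   # level widths of an example pyramid
print("level for a 1000 pixel wide display:", choose_level(widths, 1000))

tiles = visible_tiles((0, 0, 1920, 1200), (3872, 2592))
print(f"{len(tiles)} tiles needed for the top-left screenful at full size")
```

Everything outside the returned list of tiles never has to be transferred, no matter how large the full image is.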

Image pyramids are the result of a space vs. bandwidth trade-off, a kind of trade-off often found in computer science. The image pyramid obviously has a bigger file size than its single image counterpart (for finding out how much exactly, be sure to come back for part two) but, as you see in the illustration below, in terms of bandwidth it's much more efficient at displaying high-resolution images where most parts of the image are typically not visible anyway (grey area):

As you can see in the picture above, there is still more data loaded (colored area) than absolutely necessary to display everything that is visible on the screen. This is where the image pyramid parameters I mentioned before come into play: tile size and number of levels determine the relationship between the amount of storage, the number of network connections and the bandwidth required for displaying high-resolution images.
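To get a feeling for this relationship, here is a hedged Python sketch that compares a few tile sizes for one screen-sized viewport somewhere in the middle of the 3872 x 2592 photo. It assumes one network request per tile and counts pixels rather than bytes, so it ignores compression and any per-file overhead; the function name `load_cost` and the viewport position are made up for the example.

```python
def load_cost(viewport, level_size, tile_size):
    """Return (number of tiles, pixels loaded) for a viewport on one level.

    Assumes one request per tile; edge tiles are clipped to the image.
    """
    left, top, right, bottom = viewport
    level_w, level_h = level_size
    left, top = max(0, left), max(0, top)
    right, bottom = min(level_w, right), min(level_h, bottom)
    if left >= right or top >= bottom:
        return 0, 0
    first_col, first_row = left // tile_size, top // tile_size
    last_col, last_row = (right - 1) // tile_size, (bottom - 1) // tile_size
    tiles = (last_col - first_col + 1) * (last_row - first_row + 1)
    # The loaded area is the bounding box of all touched tiles.
    loaded_w = min(level_w, (last_col + 1) * tile_size) - first_col * tile_size
    loaded_h = min(level_h, (last_row + 1) * tile_size) - first_row * tile_size
    return tiles, loaded_w * loaded_h


level = (3872, 2592)
viewport = (1000, 700, 2920, 1900)   # a 1920 x 1200 window into the photo
visible = (viewport[2] - viewport[0]) * (viewport[3] - viewport[1])

for tile_size in (128, 256, 512, 1024):
    tiles, loaded = load_cost(viewport, level, tile_size)
    print(f"tile size {tile_size}: {tiles} requests, "
          f"{loaded / visible:.2f}x the visible pixels loaded")
```

Small tiles waste few pixels but need many requests; large tiles need few requests but load much more than what is visible. The right balance depends on your network and your images.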

Next

Well, this was it for part one of Inside Deep Zoom. I hope you enjoyed this short introduction to image pyramids & multi-scale imaging. If you want to find out more, as usual, I've collected some links in the Further Reading section. Other than that, be sure to come back, as the next part of this series – part two – will discuss the characteristics of the Deep Zoom image pyramid and I will show you some of the mathematics behind it.

Further Reading

- Wikipedia: Pyramid (image processing)

- Wikipedia: Gaussian Pyramid

- Wikipedia: Mipmap

- Paper: Pyramid Methods in Image Processing (PDF)

- Video: Seadragon Tech Demo (prior to Microsoft acquisition)